background

StreamKit is a web-based application for quickly onboarding data streams and analytic capabilities at a national lab. Many of the lab's analytic capabilities address similar use cases but are written in different programming languages and for different platforms.

The StreamKit application aims to bring these diverse capabilities together, both for quick internal validation and for demonstration to clients. The StreamKit workspace provides a consistent, intuitive interface to complex analytics and disparate data streams.

In this case study I focus on one analytic, which detects anomalies within a data stream and alerts the user to them. Specifically, I explore the problem of configuring that analytic for the first time.

process / research

At the time of initial design, the analytic in focus already existed and had been validated as a proof of concept on real-world data. This posed an unusual design challenge: the analytic's original developers were largely unavailable, so our team was implementing it as described in its scientific publication.

To identify the algorithm's variables, constraints, and outputs, we first met with the analytics team. Together we identified several complex issues that any interface to the anomaly detection analytic would need to address.

Nearly all of these considerations stem from the nebulous nature of the analytic's inputs and how they interact with streaming data. At configuration time, the user may not know what counts as 'anomalous' in this particular context. In addition, once the analytic is set up, the alert may not fire for some time, depending on those inputs.

process / iteration

The team follows an agile workflow with two-week sprints and regular reviews. Development happens concurrently with design, and features are added, adjusted, or removed as this use case requires.

Fast turnaround in both design and development allows us to quickly try new ideas in context with real data.

process / design review

Initial mockups were iterated on with both the UI team and the analytics team. During design reviews we verify that requirements are met and that design suggestions are technically viable.

We also hold regular design reviews with an in-house subject-matter expert (SME), working through whiteboard co-design sessions, sketches, and high-fidelity prototypes. Feedback from team stakeholders is folded into the prototypes.

solution

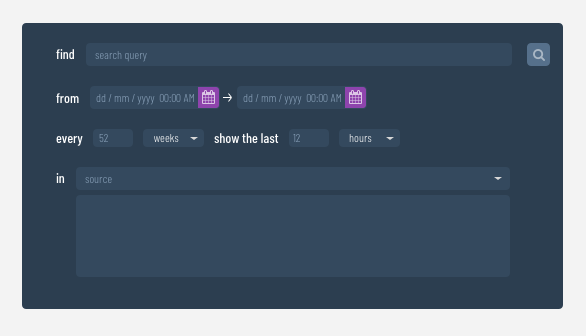

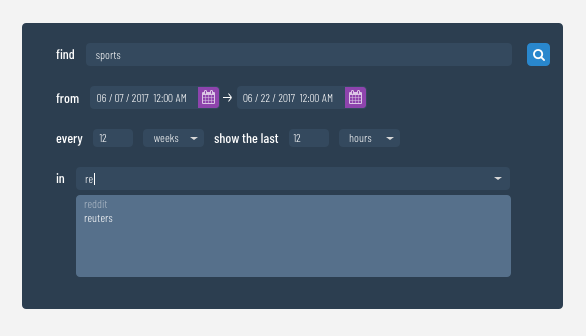

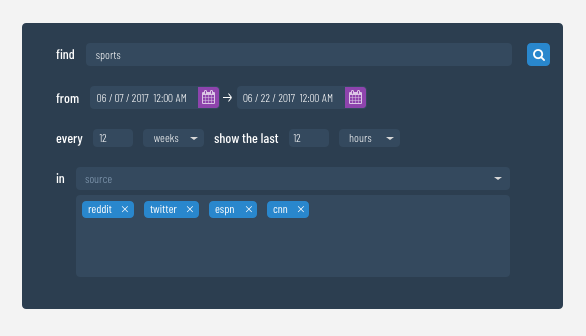

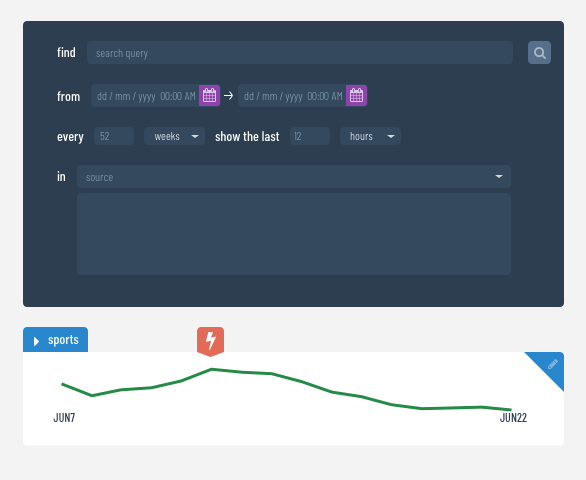

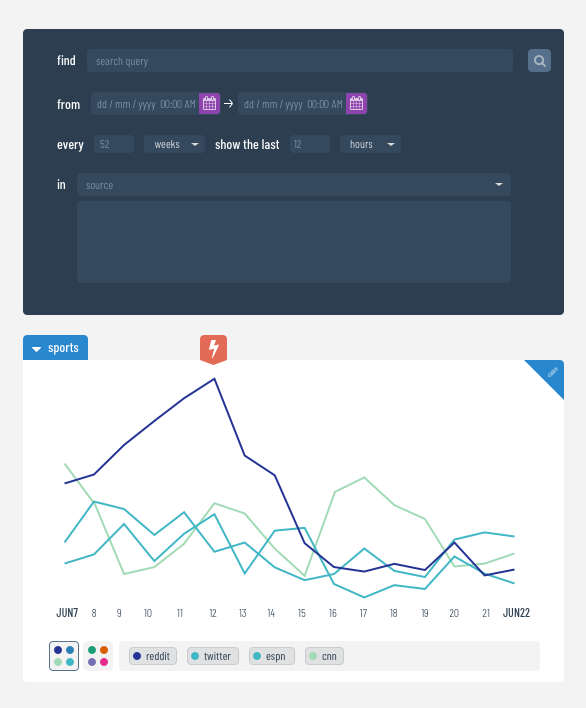

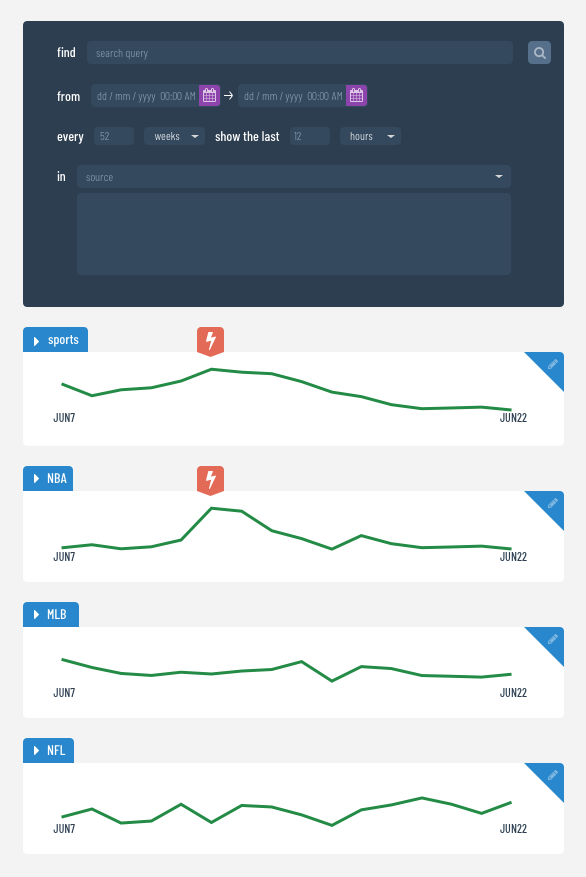

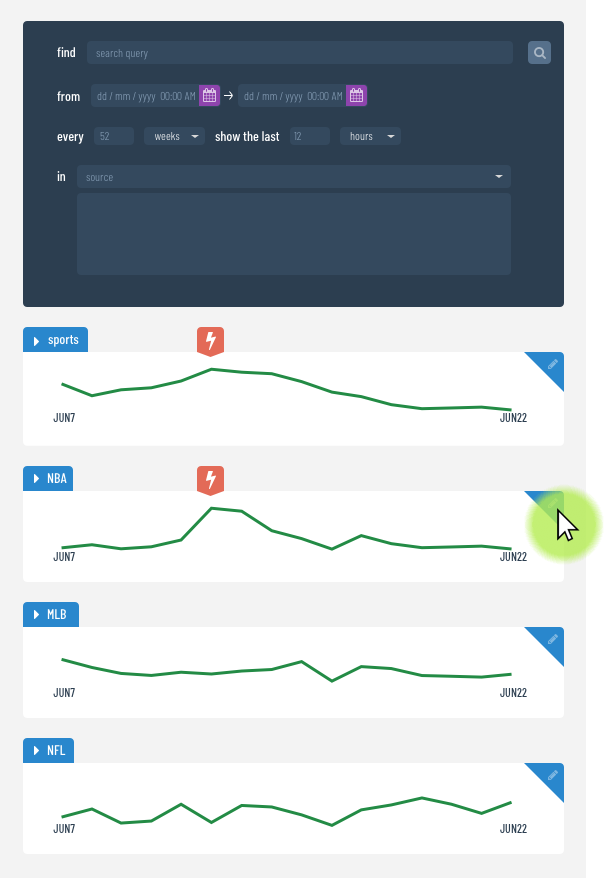

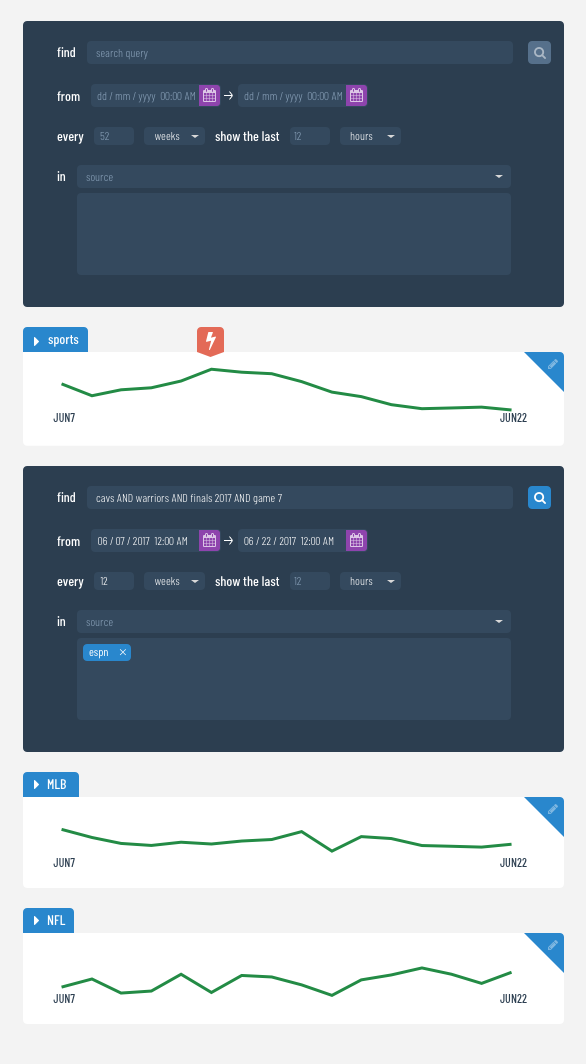

To give the user flexibility and make exploration easy, we provide an intuitive, editable interface. The conversational design of the initial form helps the user understand how the inputs relate to and constrain one another.

We also show a snapshot of past data over the same time scale, annotated with the alerts that would have fired under the user's inputs. This gives the user a sense of which alerts those inputs may generate in the future.
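One way such a preview could be produced is to replay the historical window through the detector point by point, as if the data were arriving live, and record where it fires. The TypeScript sketch below assumes that replay approach. `Point`, `Detector`, `previewAlerts`, and `thresholdDetector` are hypothetical names, and the simple threshold rule is only a stand-in for the actual anomaly analytic, which is not described here.

```typescript
// One observation from the data stream (hypothetical shape).
interface Point {
  time: number; // e.g. epoch milliseconds
  value: number;
}

// Hypothetical detector signature: given the points seen so far and the
// newest point, decide whether an alert would fire. This stands in for the
// real analytic, whose internals are not specified here.
type Detector = (history: Point[], latest: Point) => boolean;

// Replay the past window in time order, feeding each point to the detector
// as if it were streaming in, and collect the points where an alert would fire.
function previewAlerts(past: Point[], detect: Detector): Point[] {
  const ordered = [...past].sort((a, b) => a.time - b.time);
  const fired: Point[] = [];
  for (let i = 0; i < ordered.length; i++) {
    // Only data before point i is visible, mirroring live conditions.
    if (detect(ordered.slice(0, i), ordered[i])) {
      fired.push(ordered[i]);
    }
  }
  return fired;
}

// Toy stand-in detector: the threshold plays the role of a user-editable input.
function thresholdDetector(threshold: number): Detector {
  return (_history, latest) => latest.value > threshold;
}

const week: Point[] = [
  { time: 1, value: 3 },
  { time: 2, value: 9 },
  { time: 3, value: 4 },
  { time: 4, value: 12 },
];

// Alerts would have fired at times 2 and 4.
console.log(previewAlerts(week, thresholdDetector(8)).map((p) => p.time));
```

Re-running `previewAlerts` with `thresholdDetector(10)` leaves only the alert at time 4, which illustrates how editing an input immediately reshapes the preview. Copying the history with `slice` at every step keeps the sketch simple but is quadratic in the window size; a production version would more likely pass an index or a rolling window.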